Latest News

INTREPID Project Concludes with Successful 3rd Pilot

Dr. Nicholas Vretos, Technical and Scientific Coordinator of the project, represented the Visual Computation Lab in this event.

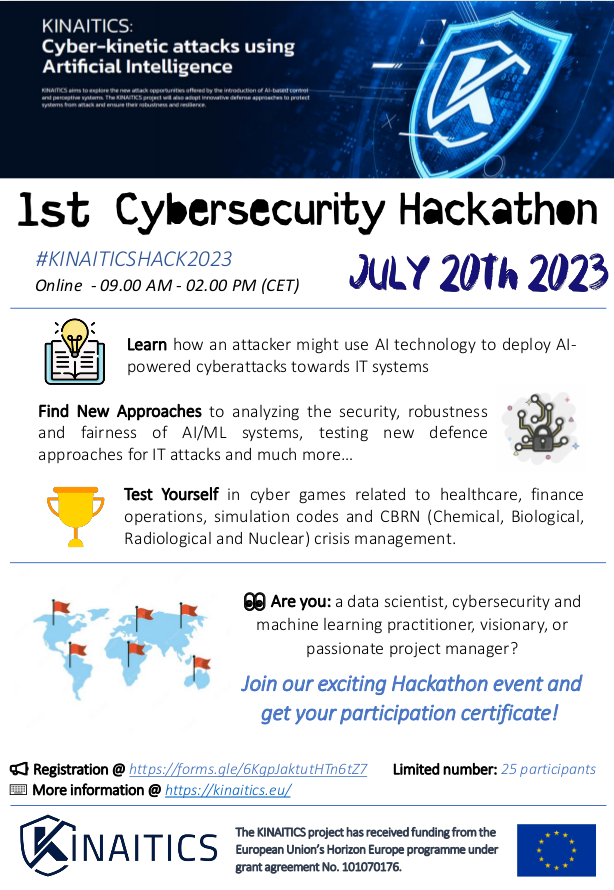

Announcing Our Hackathon Event: Dive into the World of AI Security

Are you fascinated by the cutting-edge world of artificial intelligence? Are you eager to explore the intricate realm of AI security? Join Our Exciting Hackathon Event! Prepare yourself for an insi ...

Publication on CORDIS Reveals Groundbreaking Research in Personalized Nutrition

An article featuring our groundbreaking research in personalized nutrition has been published on CORDIS, the official website of the European Commission's Community Research and Development Informa ...

New Chemical Compounds Provide Protection Against SARS-CoV-2

The interdisciplinary team at CERTH has developed two new chemical compounds that protect against SARS-CoV-2, the virus responsible for COVID-19. By screening a library of 500,000 chemical substanc ...

New EU-PRIMA Project Launched: "SWITCHtoHEALTHY", Switching Mediterranean Consumers to Mediterranean Sustainable Healthy Dietary Patterns

The SWITCHtoHEALTHY project started on 1 April 2022 as part of the PRIMA Program, funded by the European Union under Grant Agreement number 2133 - Call 2021 Section Agrofood IA, coordinated by ENC ...
